How High-Quality Traffic Bots Can Help Your Website Rank Higher

Web traffic bots are instrumental in modern-day SEO and digital marketing as a whole. Google and other search engines use bots to find and index website content. Once your pages are indexed, they can attract more website traffic and move up the search engine results pages (SERPs).

An important aspect of search engine optimization is how often bots crawl your website content. Website traffic generators can also send automated traffic to your website, with the aim of boosting your online presence.

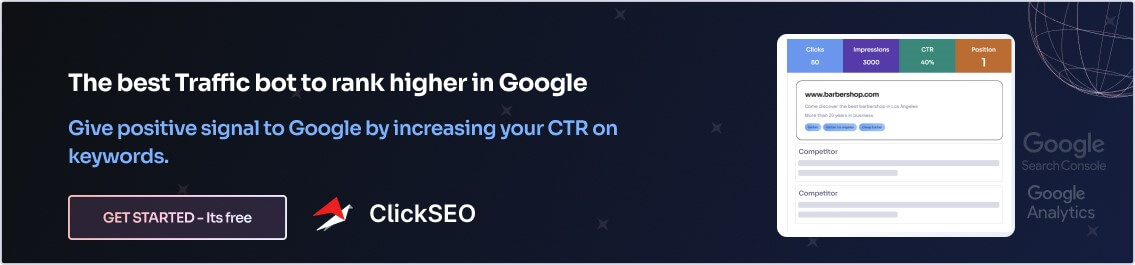

In this article, we will discuss traffic automation. We will also explore how you can leverage bots to generate website traffic and improve your website ranking using a website traffic bot such as ClickSEO.

But first, let’s see how bots work and how they influence optimization.

What Is Bot Traffic and Why Is It Important?

Bot traffic consists of website visits generated by bots rather than humans. Search engines and analytics platforms often rely on it to index web pages and collect data. It is the opposite of human or organic traffic and is also known as non-human traffic.

Bots can be good or bad, depending on their function. If used properly, these programs and scripts can crawl through your website and perform useful functions. These include indexing your web pages, lowering the bounce rate on your site, and helping it rank higher on SERPs than your competitors. Conversely, malicious bots can harm your SEO effort. As you will soon learn, there are effective ways to prevent this from happening.

Traffic Bot vs Bot Traffic

Many people use these two terms interchangeably. This is wrong because there is a clear distinction between them. Traffic bots are bots that send free non-human traffic to your site or web page. They do this by generating fake visits. They can be used for ethical purposes, like boosting your website, or for malicious purposes, such as click fraud.

On the other hand, bot traffic is any internet traffic generated by automated programs. This type of traffic is typically not associated with human users and can usually be identified using sophisticated tools. Depending on the purpose behind it, this traffic can be either legitimate or malicious. The key is to encourage legitimate bots and block malicious ones.

What Are Bad Bots?

These are malicious bots designed specifically to serve nefarious purposes. They are involved in different activities that harm your website. Some examples of malicious use are as follows:

Scraping content: This is easily the most common type on the internet. These bots scrape content from your website and republish it elsewhere without permission. The resulting duplicate content puts your optimization efforts in jeopardy.

Scraping email: Just like the previous one, this malicious bot scrapes email addresses from websites. It then proceeds to send spam emails to all of them.

DDoS attacks: DDoS stands for distributed denial of service. These bots launch attacks that overload your website with useless traffic, which can quickly cause your site to crash.

Spamming comments: These are spam bots that post spammy comments on blogs, forums, etc. Users who click on the links in these spam comments often end up on dangerous websites.

Brute force attacks: Lastly, these are bots that attempt to guess the passwords for your website. If you have weak passwords, for instance, these attacks can result in security breaches.

How to Reduce Bad Automated Website Traffic

Bad traffic can harm your website in several ways. That’s why it’s important to proactively prevent it. Here are some ways you can pull it off:

1. Install a security plugin

An effective security plugin can help you keep bad bots from accessing your website. What’s more, it can also identify which program is attacking your site and where it is operating from.

2. Add a CAPTCHA

A CAPTCHA is a way to ensure users on your website are actually humans. It works by requiring tasks that are easy for humans but hard for computer programs to complete. Adding a CAPTCHA to your website helps to reduce fake traffic, spam comments, and brute-force attacks.

3. Use a honeypot

A honeypot is essentially a trap for malicious bots. Adding a honeypot to your website will trick malicious tools into revealing themselves. This will enable you to block them. An example of a honeypot is a form field that’s visible to bots but hidden from humans, so only a bot would fill it in.
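The honeypot form field described above can be sketched in a few lines of Python. This is only an illustration: the field name `website_url` and the shape of the submitted form data are assumptions, not part of any particular plugin or framework.

```python
def is_probable_bot(form_data: dict[str, str], honeypot_field: str = "website_url") -> bool:
    """Return True when the hidden honeypot field was filled in.

    The field is assumed to be hidden from human visitors with CSS,
    so a human submission leaves it empty. The field name is hypothetical.
    """
    return bool(form_data.get(honeypot_field, "").strip())


# Example submissions (made-up data for illustration).
human_submission = {"name": "Ada", "comment": "Great post", "website_url": ""}
bot_submission = {"name": "x", "comment": "buy now", "website_url": "http://spam.example"}

print(is_probable_bot(human_submission))
print(is_probable_bot(bot_submission))
```

A submission flagged this way can be discarded quietly, without telling the sender that the trap was triggered.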

4. Restrict access to your site

Restricting access to your website will also go a long way toward cutting down bad traffic. You can either password-protect certain pages or use a service such as Cloudflare to block malicious IP addresses.
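To show what blocking malicious IP addresses means in practice, here is a minimal sketch using Python's standard `ipaddress` module. The blocked ranges are reserved documentation addresses chosen purely for illustration, and the function name is an assumption. A real service such as Cloudflare manages these lists for you.

```python
import ipaddress

# Hypothetical blocklist: these are reserved documentation ranges, not real offenders.
BLOCKED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.7/32"),
]


def is_blocked(client_ip: str) -> bool:
    """Return True when the client IP falls inside any blocked network."""
    address = ipaddress.ip_address(client_ip)
    return any(address in network for network in BLOCKED_NETWORKS)


print(is_blocked("203.0.113.45"))
print(is_blocked("192.0.2.10"))
```

Blocking whole ranges is a blunt tool, so it is worth reviewing the list regularly to avoid shutting out legitimate visitors.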

Note

Ensure that you don’t block good bots while you are trying to keep out the bad ones.

Good Bots

These are web crawlers that generate useful traffic that helps your website. They are typically employed by platforms like Google to find and index new web pages. Good bots also help your content get found and appear at the top of SERPs. Besides these functions, good bots serve other purposes, which include:

Site-monitoring: These bots check that your website’s uptime and performance are optimal and help you keep track of both. You should consider setting them up for your website.

Analysis: Some good programs help you collect information about your website’s traffic. They then display the traffic metrics on a dashboard. This way, you consistently get a comprehensive understanding of your audience.

Social media management: With these good programs, you can automate your social media marketing effort. For instance, you can schedule your posts on Facebook in advance.

Feed management: Just like with social media management, you can automatically share your content with subscribers via RSS feeds.

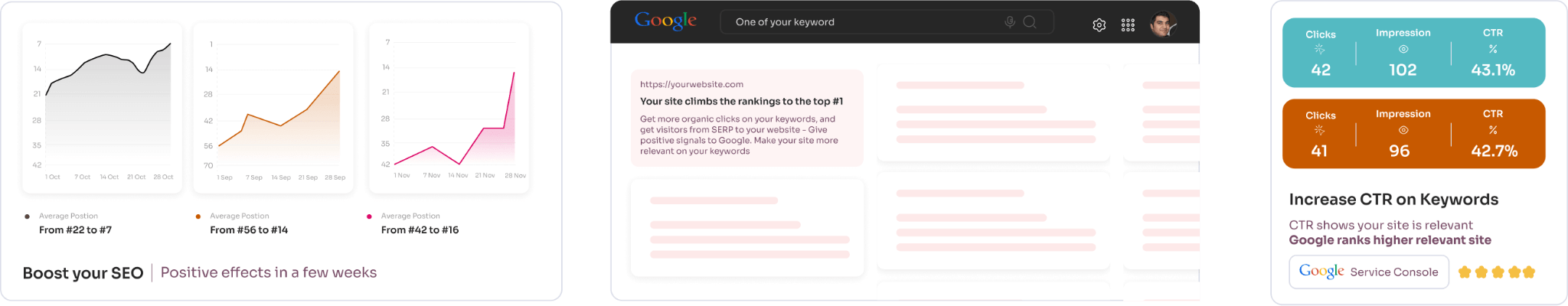

Organic traffic bot: These are bots designed to increase the click-through rate (CTR) of your keywords. In other words, they generate organic clicks from the search results to your website. The idea is that when your website receives more clicks than your competitors for a given keyword, Google treats your site as the most relevant result for that keyword and ranks it higher.

How to Leverage Good Web Traffic for Your Website

In addition to blocking malicious web traffic, you can also leverage legitimate traffic to improve your website. The following are some things you can do:

1. Make your website bot-accessible

Ensure your web design is seamless and your code is clean. Be especially mindful of how you use the robots.txt file. This is the file that tells bots which parts of your website they can and can’t crawl.
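One way to check that your robots.txt rules do what you expect is to test them with Python's standard `urllib.robotparser` module. The rules and URLs below are made-up examples, and the helper name `build_parser` is an assumption used only for this sketch.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content: every bot may crawl the site except /admin/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a parser that can answer crawl questions."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
print(parser.can_fetch("Googlebot", "https://example.com/blog/post"))
print(parser.can_fetch("Googlebot", "https://example.com/admin/login"))
```

Running a check like this before publishing a new robots.txt helps you avoid blocking good bots from pages you want indexed.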

2. Take advantage of sitemaps

A sitemap is a file that details all the pages available on your website. Adding a sitemap to your site allows good bots to easily index your website. As soon as you create your sitemap, submit it to Google Search Console.
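As a rough illustration of what a sitemap contains, the sketch below builds a minimal XML sitemap with Python's standard library. The page URLs and the function name `build_sitemap` are assumptions. Most content management systems and SEO plugins can generate this file for you.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a minimal XML sitemap that lists each URL in a <loc> element."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        url_element = ET.SubElement(urlset, "url")
        ET.SubElement(url_element, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages for illustration.
sitemap_xml = build_sitemap(["https://example.com/", "https://example.com/blog/"])
print(sitemap_xml)
```

The output would typically be saved as sitemap.xml at the root of your site before you submit its address.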

3. Use structured data

Structured data is code that helps search engines understand the content on your website. It is also known as schema markup. Several tools are available to create and add structured data to your website. A good example is Google’s Structured Data Markup Helper.
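Structured data is commonly written as JSON-LD using the schema.org vocabulary. The sketch below generates such a snippet for an article. The function name, the chosen properties, and the sample values are all assumptions for illustration, and the values are assumed to come from a trusted source.

```python
import json


def article_json_ld(headline: str, author: str, date_published: str) -> str:
    """Return a JSON-LD script tag describing an article (schema.org Article type)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }
    payload = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Hypothetical article details for illustration.
snippet = article_json_ld("How Traffic Bots Work", "Jane Doe", "2024-01-15")
print(snippet)
```

The resulting script tag is typically placed in the HTML of the page it describes.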

4. Regularly update your web content

Content remains the #1 way for search engines to find and index your website. Regularly add relevant, useful content to your website.

Boost Your Website with the Right Strategy

Non-human traffic can help boost your website’s popularity and increase your business’s revenue, but only if you use the right internet bots. ClickSEO is one such tool. By using ClickSEO, you achieve three things. One, you increase your site’s click-through rate (CTR). Two, you reduce the bounce rate. And lastly, you push your web pages higher on SERPs.

ClickSEO is one of the best traffic bots for generating high-quality organic visits to your website using 4G and residential IPs. All clicks are recorded in Google Search Console and Google Analytics. Using this tool can help your website rank higher, and more quickly.

3 days free trial – ClickSEO Traffic bot
